In 2018 I started experimenting with Unity’s ‘DOTS’ (Data-Oriented Technology Stack) because I was failing to get decent performance from standard MonoBehaviour Unity for an experimental RTS project. Fast forward to 2021: after a few years of experience with DOTS, I’ve decided to switch to native C++. Previous posts on this blog used Unity DOTS. This post details my experience going back to a simple, not overly complex subset of C++ (i.e. I’m not using all the ‘modern’ C++ features when there’s no good reason to).

Where ‘native’ is used in this post it is referring to writing in a native language, in this case C/C++, as opposed to Unity C#.

Why switch?

Some of the reasons for switching aren’t really Unity-specific. They apply to 3rd party engines and middleware in general. As previous blog posts showed, I found myself rewriting the navigation (I wanted a deterministic, higher-performing dynamic 2D planar navmesh), the animation (to cope with thousands of characters), the map editor (it needs to work standalone and be usable by players) and eventually even the maths and physics (for determinism, among other reasons).

The breaking point came while writing a Delaunay triangulation implementation. I had to hack quite a bit of C# code with unsafe regions and was limited in general by the DOTS container types. I did it and got it working (see the previous blog post), but it was a total square peg in a round hole. I didn’t feel comfortable with the state of the code or its maintainability into the future, and this made me re-evaluate what I was doing. Why go to all this effort if I can’t run it in 1 year without even more work, let alone 3 or 6 years later? My gut feeling is that code written in C/C++ will need less catch-up work 2, 5 or 20 years into the future than code in a 3rd party middleware-specific language like C# DOTS (DOTS is effectively another language compared to normal C#). I like not having to worry about the future of DOTS or Unity anymore, I like being able to implement more features myself, and most of all I like not worrying about middleware going the way of Microsoft’s XNA (dead).

Experience going back to C/C++

Despite all this, my initial plan was to convert only the simulation code to C++. I would create a plugin and then continue to leverage a 3rd party engine for graphics & other generalist things. However, I started enjoying programming in a native project again so much that I decided to convert all the way, graphics and all. I’m not a C/C++ super-fan, but it’s still a very viable language for game dev. I like straightforward code that compiles fast and runs quickly. This can be achieved with C/C++ by keeping code simple, well organised and not over-using certain features of the language.

My iteration time with Unity, from a single-line change in an average gameplay implementation file to the app running, was 13 seconds. In the C++ project it’s 0.5-2 seconds. This alone makes working on the project much more enjoyable and dramatically cuts down on distractions. The iteration time may vary as the project grows, but I don’t see it changing drastically at this point. Having said that, I can still see the pros of working with a shared general-purpose engine in a larger team, or when the engine is a better fit for the project, but that’s not the use case for this project at the moment.

Native app task list

Suddenly there were quite a few things to code. Fortunately I’d done most of them before at some point, so it wasn’t long before I had an equivalent app in C++, and eventually I surpassed the features of the Unity version (not Unity the engine, obviously, but the things I need in this project). Here is a partial list of what I implemented, in approximate chronological order:

- App input, window setup (using GLFW)

- Basic primitive debug drawing API (OpenGL for now), Camera

- Custom memory allocation setup, persistent or frame/scratch allocators

- Custom container classes (Stretchy array, hashmap, multihashmap) that can take custom allocators & other things to avoid std lib

- Initial heightmap terrain work

- Hierarchical transform system

- Import box2d & glm (I’d like to replace glm later with a leaner, smaller maths lib but for now it’ll do)

- Fixed-point maths & convert box2d to fixed-point maths

- Delaunay navmesh

- All gameplay systems (pathfinding, boids, spatial partitioning, attacking and lots more)

- Tried entt (ECS) then wrote my own simpler & basic entity manager with pools

- Import ImGui, Notifications and setup debug UI

- FBX loading, custom binary model format, instanced mesh rendering, skeletal animation

- Various shaders for terrain, screenspace, skinned mesh & static mesh

- Basic depth-buffer shadows to add some depth to objects in the scene

- Minimalist in-app profiler

- Custom level file format

- Editor work, Translation & rotation gizmos, map save/load, Terrain brushes, prefab palette

- Generic config variable file

- Hot-reloading for the config var file & shaders

- GameDatabase/ResourceManager & very basic prefab workflow stuff
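
The list above mentions persistent and frame/scratch allocators. A frame allocator is usually a linear (bump) allocator: each allocation advances an offset through one block, and the whole block is released at once at the end of the frame. Below is a minimal sketch of that idea, not the project’s actual code; `FrameAllocator`, `alloc`, `allocArray` and `reset` are hypothetical names, and it assumes a single fixed-size block obtained from `malloc`.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstdlib>

// Hypothetical linear ("bump") allocator for per-frame scratch memory.
// Each allocation just advances an offset; reset() frees everything at once.
class FrameAllocator {
public:
    explicit FrameAllocator(std::size_t capacity)
        : base_(static_cast<std::uint8_t*>(std::malloc(capacity))),
          capacity_(capacity), offset_(0) {
        assert(base_ != nullptr);
    }
    ~FrameAllocator() { std::free(base_); }

    // Owns a raw block, so copying is disallowed.
    FrameAllocator(const FrameAllocator&) = delete;
    FrameAllocator& operator=(const FrameAllocator&) = delete;

    // Returns nullptr if the request does not fit in the rest of the block.
    // `align` must be a power of two.
    void* alloc(std::size_t size, std::size_t align = alignof(std::max_align_t)) {
        const std::uintptr_t start = reinterpret_cast<std::uintptr_t>(base_);
        const std::uintptr_t mask = static_cast<std::uintptr_t>(align - 1);
        const std::uintptr_t aligned = (start + offset_ + mask) & ~mask;
        const std::size_t newOffset = static_cast<std::size_t>(aligned - start) + size;
        if (newOffset > capacity_) return nullptr;
        offset_ = newOffset;
        return reinterpret_cast<void*>(aligned);
    }

    // Memory is uninitialised; intended for trivially constructible types.
    template <typename T>
    T* allocArray(std::size_t count) {
        return static_cast<T*>(alloc(sizeof(T) * count, alignof(T)));
    }

    // Call once per frame; every pointer handed out since the last reset becomes invalid.
    void reset() { offset_ = 0; }

    std::size_t used() const { return offset_; }

private:
    std::uint8_t* base_;
    std::size_t capacity_;
    std::size_t offset_;
};
```

In use, per-frame temporaries (for example, scratch arrays built during a gameplay update) would come from `allocArray`, with a single `reset()` at the start of each frame. Nothing is freed individually, which is what keeps it cheap; the catch is that no pointer may outlive the frame.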
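
The fixed-point bullet above ties back to determinism: floating-point results can differ between compilers, optimisation settings and CPUs, while well-defined integer arithmetic does not. The sketch below is a minimal Q16.16 scalar (16 integer bits, 16 fractional bits). The format, the `Fixed` type and its methods are assumptions for illustration; the post does not say which representation the project uses.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical Q16.16 fixed-point scalar: a 32-bit integer whose low 16 bits
// hold the fraction. Integer maths gives bit-identical results on every
// platform, which is the property a deterministic simulation relies on.
struct Fixed {
    static constexpr int kFracBits = 16;
    static constexpr std::int32_t kOne = 1 << kFracBits;

    std::int32_t raw = 0;

    static Fixed fromRaw(std::int32_t r) { Fixed f; f.raw = r; return f; }
    static Fixed fromInt(std::int32_t i) { return fromRaw(i * kOne); }
    // Builds num/den without touching floats, e.g. fromRatio(1, 2) is 0.5.
    static Fixed fromRatio(std::int32_t num, std::int32_t den) {
        assert(den != 0);
        return fromRaw(static_cast<std::int32_t>(static_cast<std::int64_t>(num) * kOne / den));
    }

    Fixed operator+(Fixed o) const { return fromRaw(raw + o.raw); }
    Fixed operator-(Fixed o) const { return fromRaw(raw - o.raw); }
    // Widen to 64 bits so the intermediate product cannot overflow, then shift
    // back down. Relies on arithmetic right shift for negative values
    // (guaranteed since C++20, and what mainstream compilers already do).
    Fixed operator*(Fixed o) const {
        return fromRaw(static_cast<std::int32_t>((static_cast<std::int64_t>(raw) * o.raw) >> kFracBits));
    }
    Fixed operator/(Fixed o) const {
        assert(o.raw != 0);
        return fromRaw(static_cast<std::int32_t>(static_cast<std::int64_t>(raw) * kOne / o.raw));
    }
    bool operator==(Fixed o) const { return raw == o.raw; }
    bool operator<(Fixed o) const { return raw < o.raw; }

    // Debug display only; feeding this back into the simulation defeats the point.
    double toDouble() const { return static_cast<double>(raw) / kOne; }
};
```

Addition and subtraction are plain integer operations, while multiplication and division widen to 64 bits so the intermediate value cannot overflow. Converting a library like box2d to something like this would also need fixed-point square root and trigonometry, which this sketch leaves out.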

I had done variations of most of these things before, so it was quite quick to get it all done, and it was nice to have a firmer target this time round. A mistake I’d made in the past was over-engineering by trying to write a generalist engine when I didn’t really need one. This time I focussed on actual requirements, then semantically compressed or tidied up the code as appropriate afterwards. With all of the above in the project I still get an iterative build time of ~1 second. A full rebuild, or changing something in a precompiled header, takes a lot longer, but I rarely need to do that.

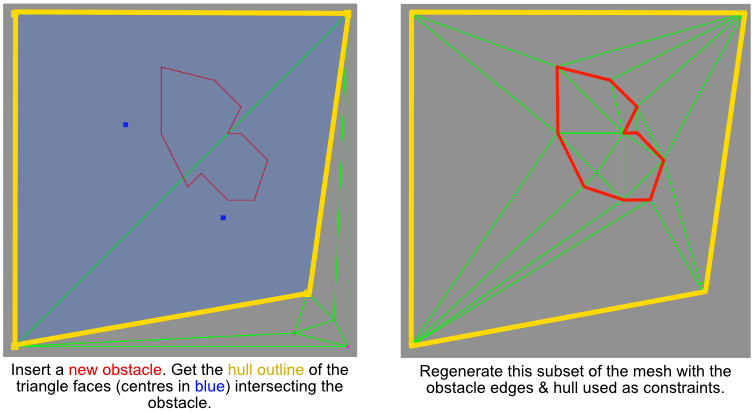

Procedural obstacle problems

In my previous post, RTS Pathfinding 2, I described generating polygon bounds for chasms in the terrain by comparing the height deltas of adjacent heightmap cells. I initially planned to extend this to other terrain features such as mountains, but in practice it was hard to make robust. The images below show problems that came up when I scanned height deltas for hills with ramps leading up to them. In the end I opted for a simpler solution: manually placing collider nodes.
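
A minimal sketch of the height-delta scan described above, assuming a row-major float heightmap and a single step threshold. `Heightmap`, `markSteepCells` and `maxStep` are hypothetical names, and the polygon tracing that would follow is not shown.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical row-major heightmap: one height sample per cell.
struct Heightmap {
    int width = 0;
    int height = 0;
    std::vector<float> h;
    float at(int x, int y) const { return h[static_cast<std::size_t>(y) * width + x]; }
};

// Flags every cell whose height differs from any 4-connected neighbour by more
// than maxStep. Turning the flagged regions into polygon bounds (contour
// tracing, simplification) is a separate step not shown here.
std::vector<std::uint8_t> markSteepCells(const Heightmap& map, float maxStep) {
    assert(map.h.size() == static_cast<std::size_t>(map.width) * map.height);
    std::vector<std::uint8_t> blocked(map.h.size(), 0);
    const int dx[4] = {1, -1, 0, 0};
    const int dy[4] = {0, 0, 1, -1};
    for (int y = 0; y < map.height; ++y) {
        for (int x = 0; x < map.width; ++x) {
            const float centre = map.at(x, y);
            for (int i = 0; i < 4; ++i) {
                const int nx = x + dx[i];
                const int ny = y + dy[i];
                if (nx < 0 || ny < 0 || nx >= map.width || ny >= map.height) continue;
                if (std::fabs(map.at(nx, ny) - centre) > maxStep) {
                    blocked[static_cast<std::size_t>(y) * map.width + x] = 1;
                    break;  // one steep neighbour is enough
                }
            }
        }
    }
    return blocked;
}
```

Because a ramp is deliberately a run of small steps, a single `maxStep` threshold leaves ramp cells unflagged while the ramp’s sides may only partly cross it, so the flagged band around a ramped hill tends to be ragged where the two meet. That is one plausible source of the noisy polygons, not a reproduction of the specific cases in the post.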

Cliff painting

As mentioned above, procedurally generating obstacles from terrain features no longer seemed like a good choice. It’s hard to precisely configure the obstacle shapes: using height deltas alone to draw polygons around mountains and chasms generated a lot of noise, which ultimately produced awkward navmesh and rigid body obstacles. I wanted precise control over obstacle shapes so that I could focus on setting up the gameplay and map design just how I wanted, without worrying whether a polygon wrapped correctly around a terrain feature.

Lots of RTS games have ramps and cliffs. Cliffs make it easy to set up mazes and direct the flow of units, and thus tactics, across the map. Blizzard RTS games tend to have well-defined cliffs; in StarCraft II they are in a way essential for the Terran race, which depends on ‘turtling’ up behind strong base defences. Total Annihilation or Supreme Commander style RTS games tend to have much more open maps, but with quite interesting features. I wanted a bit of both, but I thought the way maps are set up in Blizzard RTS games like Warcraft III and StarCraft II might make for more interesting tactics & gameplay, which is why I added a cliff painting tool.

The cliffs are baked into the terrain with a preset height-gradient brush. Each cliff covers 5×5 heightmap cells, with per-cell strengths blending between a lower and an upper cliff height. Ramps can also be painted to allow paths between cliff levels; they work the same way but with a smoother elevation gradient. Rigid bodies are automatically generated at cliff boundaries. These act as physical barriers to units at or below the cliff level, and are also inserted into the navmesh as obstacles.
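
A minimal sketch of baking a 5×5 cliff or ramp stamp into a heightmap. The mask values, the `stampCliff` function and the rule that overlapping stamps only ever raise terrain (`std::max`) are all assumptions for illustration; the post only says each cliff covers 5×5 cells with strengths between a lower and upper height, and that ramps use a smoother gradient.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical 5x5 brush mask, row-major. Each weight in [0, 1] blends between
// the lower (0) and upper (1) cliff height.
constexpr int kMaskSize = 5;
using CliffMask = std::array<float, kMaskSize * kMaskSize>;

// Made-up example masks: a cliff plateau with a hard one-cell edge, and a ramp
// whose weights rise linearly along x so units can walk between levels.
constexpr CliffMask kCliffMask = {
    0.f, 0.f, 0.f, 0.f, 0.f,
    0.f, 1.f, 1.f, 1.f, 0.f,
    0.f, 1.f, 1.f, 1.f, 0.f,
    0.f, 1.f, 1.f, 1.f, 0.f,
    0.f, 0.f, 0.f, 0.f, 0.f,
};
constexpr CliffMask kRampMask = {
    0.f, .25f, .5f, .75f, 1.f,
    0.f, .25f, .5f, .75f, 1.f,
    0.f, .25f, .5f, .75f, 1.f,
    0.f, .25f, .5f, .75f, 1.f,
    0.f, .25f, .5f, .75f, 1.f,
};

// Bakes the mask, centred on cell (cx, cy), into a row-major heightmap.
// Heights are only ever raised (std::max), so overlapping stamps merge into one
// plateau instead of leaving dips; that merge rule is an assumption.
void stampCliff(std::vector<float>& heights, int width, int height, int cx, int cy,
                float lower, float upper, const CliffMask& mask) {
    assert(heights.size() == static_cast<std::size_t>(width) * height);
    const int half = kMaskSize / 2;
    for (int my = 0; my < kMaskSize; ++my) {
        for (int mx = 0; mx < kMaskSize; ++mx) {
            const int x = cx + mx - half;
            const int y = cy + my - half;
            if (x < 0 || y < 0 || x >= width || y >= height) continue;  // clip at map edge
            const float target = lower + mask[my * kMaskSize + mx] * (upper - lower);
            float& cell = heights[static_cast<std::size_t>(y) * width + x];
            cell = std::max(cell, target);
        }
    }
}
```

In a scheme like this the cliff edge falls exactly where the mask steps from 0 to 1, so the boundary can be derived from the painted cells rather than recovered from noisy height deltas.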

The cliff obstacles are 100% predictable, allowing the designer to focus on crafting the map. However, I didn’t want the game world to look too uniform and grid-like. I still wanted more varied terrain features, if only as background visuals, so I added a manual obstacle painting tool to the editor.

Terrain brushes

I added a terrain brush to the editor with basic height-altering operations such as stamp, average & invert, and sourced a few textures for it. This let me very quickly paint complex, interesting terrain around the map with a few clicks.
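
A minimal sketch of the three brush operations on a heightmap grid. The stamp is assumed to be a small square greyscale texture with values in [0, 1]; `Grid`, `BrushOp` and `applyBrush` are hypothetical, and treating ‘invert’ as stamping downward is a guess at what the tool does.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical brush operations. "Invert" as "stamp downward" is an assumption.
enum class BrushOp { Stamp, Invert, Average };

// Hypothetical row-major heightmap.
struct Grid {
    int width = 0;
    int height = 0;
    std::vector<float> v;
    bool inside(int x, int y) const { return x >= 0 && y >= 0 && x < width && y < height; }
    float& at(int x, int y) { return v[static_cast<std::size_t>(y) * width + x]; }
    float at(int x, int y) const { return v[static_cast<std::size_t>(y) * width + x]; }
};

// Applies a (2r+1)x(2r+1) greyscale stamp (values in [0, 1]) centred on (cx, cy).
void applyBrush(Grid& terrain, const std::vector<float>& stamp, int r,
                int cx, int cy, float strength, BrushOp op) {
    const int size = 2 * r + 1;
    assert(stamp.size() == static_cast<std::size_t>(size) * size);
    // Average reads from an unmodified copy so the result doesn't depend on
    // iteration order. Copying the whole map is wasteful; a real tool would
    // only snapshot the brush footprint.
    const Grid before = terrain;
    for (int sy = 0; sy < size; ++sy) {
        for (int sx = 0; sx < size; ++sx) {
            const int x = cx + sx - r;
            const int y = cy + sy - r;
            if (!terrain.inside(x, y)) continue;
            const float w = stamp[static_cast<std::size_t>(sy) * size + sx] * strength;
            switch (op) {
            case BrushOp::Stamp:
                terrain.at(x, y) += w;
                break;
            case BrushOp::Invert:
                terrain.at(x, y) -= w;
                break;
            case BrushOp::Average: {
                // Blend toward the mean of the 3x3 neighbourhood (clipped at edges).
                float sum = 0.0f;
                int n = 0;
                for (int oy = -1; oy <= 1; ++oy)
                    for (int ox = -1; ox <= 1; ++ox)
                        if (before.inside(x + ox, y + oy)) {
                            sum += before.at(x + ox, y + oy);
                            ++n;
                        }
                const float t = std::min(w, 1.0f);  // never overshoot the mean
                terrain.at(x, y) += (sum / n - before.at(x, y)) * t;
                break;
            }
            }
        }
    }
}
```

In a scheme like this, the stamp texture is what lets a single click lay down a detailed shape, while Average is useful for softening the seams between stamps.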

I painted some mountainous terrain near the map boundaries and next to base cliffs, which made the cliffs appear to merge into more natural terrain formations. I was quite happy with the result: endless room for visual variation in the terrain, with simple, predictable & 100% controllable obstacles for gameplay.

Gameplay ready map

The focus now was to get a map ready for unit gameplay experimentation. This really just required obstacles for cliffs, mountains & chasms (chasms are navmesh-only obstacles with no physical barrier). But it was nice to add some basic graphics using the terrain brushes, and I also threw together a very basic splatmap terrain shader so that I could visualise these things better.

I wanted one more map feature before starting gameplay prototyping: prefab obstacles, such as rocks. I had previously seen a very neat-looking prefab palette tool in a Jonathan Blow stream, so I added a similar tool to my editor that lets me visually select objects to place on the map. I added a few rock meshes to the prefab database and scattered some around part of the map.

The map now feels ready to use as a base for some gameplay experimentation. The embedded video for this post shows more of the general C++ tech progress and map editing.